GPU Compute

Kickstart and accelerate your AI workloads with early access to the latest NVIDIA GPUs.

Get Started

AI-native Workloads

Visionbay is an AI-native supercomputing platform, purpose-built for the AI era. It integrates next-generation infrastructure, AI-ready tools and workflows, and expert AI operations to support the world's most demanding AI workloads, delivering performance, stability, and transparency at scale. Accelerate your AI deployment and go to market faster with Visionbay.

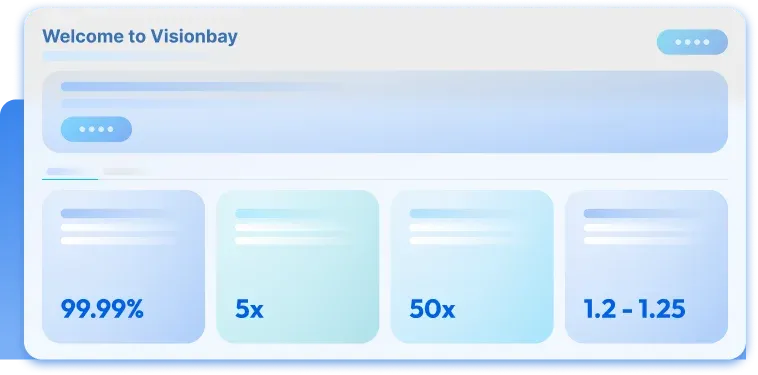

Deliver Real-Time Reliability and Data Security

Uptime Tier III compliant AI data center verified by L5 Commissioning

99.99%

Get to Market Faster

Improvement in throughput per megawatt (Blackwell vs. Hopper Architecture)

5x

Business Revenue Potential

Leap in overall AI factory output (Blackwell vs. Hopper Architecture)

50x

World-class PUE Efficiency

sets a new benchmark

1.2 - 1.25

Discover How We Power Your AI Workloads

Our GPU Portfolio

Powered by NVIDIA's latest accelerated computing platform, delivering scalable performance for AI and HPC workloads.

AI-Native Cloud Experience

Built for seamless deployment, scalable performance, and continuous operations.

Thousands of GPUs in One Cluster

Built for large-scale GPU clusters, supporting scalable and high-performance workloads.

Fully Managed Operations

End-to-end managed operations for reliable system performance.

Dedicated Expert Support

Access dedicated AI and cloud experts when you need them, supporting your projects from deployment to optimization.

Ready-to-go Solutions & Tools

Powered by NVIDIA's latest accelerated computing platform delivering scalable performance for AI and HPC workloads.

NVIDIA

GB300 NVL72

- 72x NVIDIA Blackwell Ultra GPUs with 279GB of HBM3e memory each

- 36x NVIDIA Grace CPUs with 2,592 Arm® Neoverse™ V2 cores

- Up to 17 TB LPDDR5X

- 130 TB/s NVLink, with 800 Gb/s InfiniBand per GPU

- Ubuntu 24.04 LTS for NVIDIA® GPUs (CUDA® 12)

NVIDIA DGX™

GB200 NVL72

- 72x NVIDIA Blackwell GPUs with 186GB of HBM3e memory each

- 36x NVIDIA Grace CPUs with 2,592 Arm® Neoverse™ V2 cores

- Up to 17 TB LPDDR5X

- 28.8 Tbit/s InfiniBand

- NVIDIA DGX OS / Ubuntu 24.04 LTS for NVIDIA® GPUs (CUDA® 12)

Setting a new standard with NVIDIA GB300 NVL72

NVIDIA GB300 NVL72 on Visionbay delivers an unprecedented 50x increase in output for AI inference workloads compared to the NVIDIA Hopper platform, enabling rapid deployment of larger, more sophisticated AI models.

Next-level AI reasoning performance

NVIDIA GB300 NVL72 delivers up to 10x improved user responsiveness, 5x greater throughput per watt, and 1.5x more NVFP4 dense compute compared to previous-generation architectures.

Expanded GPU memory

With up to 20TB of HBM3e high-bandwidth GPU memory per rack, the GB300 NVL72 enables larger batch sizes and handles complex, memory-intensive AI models, such as reasoning models, more efficiently.
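
As a rough sanity check on the per-rack figure, the sketch below multiplies the 72 GPUs per rack by the 279GB HBM3e figure from the GB300 NVL72 spec list. It assumes that figure applies per GPU and that units are decimal (1TB = 1,000GB). The function name `rack_hbm_tb` is illustrative and not part of any Visionbay or NVIDIA tooling.

```python
def rack_hbm_tb(gpus_per_rack: int, hbm_gb_per_gpu: float) -> float:
    """Return total HBM capacity per rack in TB, assuming 1 TB = 1,000 GB."""
    return gpus_per_rack * hbm_gb_per_gpu / 1000


# 72 GPUs per rack; 279 GB of HBM3e is treated as a per-GPU figure (an assumption).
total_tb = rack_hbm_tb(72, 279)
print(f"{total_tb:.1f} TB of HBM3e per rack")  # prints "20.1 TB of HBM3e per rack"
```

Under these assumptions the result lines up with the "up to 20TB" per-rack figure.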

Next-generation connectivity

The GB300 NVL72 features fifth-generation NVIDIA NVLink™ technology with 130TB/s aggregate bandwidth, paired with NVIDIA Quantum-X800 InfiniBand switches and ConnectX-8 SuperNICs for dedicated 800 Gb/s connectivity per GPU, ensuring seamless GPU-to-GPU communication and maximum efficiency.
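
To put the connectivity figures in per-GPU and per-rack terms, the sketch below divides the 130TB/s aggregate NVLink bandwidth across 72 GPUs and sums the 800 Gb/s InfiniBand links. It assumes the NVLink aggregate is shared evenly across all 72 GPUs and that each GPU has exactly one 800 Gb/s link. The constant names are illustrative.

```python
GPUS_PER_RACK = 72
NVLINK_AGGREGATE_TB_S = 130       # TB/s, aggregate NVLink figure quoted above
INFINIBAND_GBIT_S_PER_GPU = 800   # Gb/s, per-GPU InfiniBand figure quoted above

# Even split of the NVLink aggregate across GPUs (an assumption).
nvlink_per_gpu_tb_s = NVLINK_AGGREGATE_TB_S / GPUS_PER_RACK

# Sum of the per-GPU InfiniBand links, converted from Gb/s to Tbit/s.
infiniband_rack_tbit_s = GPUS_PER_RACK * INFINIBAND_GBIT_S_PER_GPU / 1000

print(f"NVLink per GPU: {nvlink_per_gpu_tb_s:.2f} TB/s")
print(f"InfiniBand per rack: {infiniband_rack_tbit_s:.1f} Tbit/s")
```

Note the unit difference: the NVLink figure is in terabytes per second, while the InfiniBand figure is in bits per second.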

Self-service Console

Observability & Monitoring